AI governance is not a policy problem. It’s a visibility problem.

Most enterprises are approaching it from the outside in: writing acceptable-use policies, issuing guidelines, and hoping employees comply. That approach fails because it relies on assumption rather than evidence. You cannot enforce what you cannot see, and most organizations have no reliable way to see which AI tools are actually running inside their environment.

The numbers back this up.

86% of organizations lack visibility into how data flows to and from AI tools, and nearly 98% report unsanctioned AI use inside their environments. Meanwhile, only 37% have AI governance policies in place, meaning the majority are running blind and hoping nothing surfaces in an audit, a breach notification, or a regulator’s inbox. With the EU AI Act reaching full enforcement in August and other regulations to follow, that window is closing fast.

Today, we’re closing the visibility gap.

Why the problem is bigger than most teams realize

The first thing most security teams discover when they actually look is that their AI exposure is far broader than they expected. It isn’t just employees opening ChatGPT. AI capabilities are embedded across the entire SaaS stack: in productivity suites, design tools, communication platforms, and countless third-party applications. Canva has AI. Slack has AI. Microsoft 365 has AI. Most of it is on by default, and in many cases employees use it without realizing they’re exposing the organization to risk.

That’s before you account for the tools employees actively choose. Gartner estimates that 69% of organizations suspect employees are using prohibited GenAI tools, which means most are working from suspicion rather than data.

And even among organizations that have already invested in governance tooling, the visibility gap persists. Here’s why.

The three phases of a real governance program

Before getting into what’s shipping today, it helps to frame where these capabilities sit in a broader governance model, because the organizations that use them most effectively aren’t treating this as a one-time implementation. They’re building a program.

Real AI governance runs in three phases.

Phase 1 is inventory: before you can govern anything, you need to know what’s there. Not just the tools IT approved, but every AI application running in your environment, including the ones nobody sanctioned. This is your actual AI footprint. It exists whether you’ve mapped it or not.

Phase 2 is rationalization: once you have the inventory, you can classify tools by risk, map AI usage to actual workflows by department, and build governance policies grounded in real behavioral data rather than assumptions. This is where policy becomes credible, because it’s based on what people are actually doing.

Phase 3 is enforcement: continuous monitoring, real-time alerts when a violation occurs, endpoint-level blocking of non-compliant tools, and audit-ready reporting that holds up under regulatory scrutiny. This is what makes governance a living control rather than a document.

Most organizations we talk to are somewhere between Phase 1 and Phase 2. They have partial inventory and no enforcement. The capabilities we’re releasing today are designed to move you forward on both fronts.

What’s New

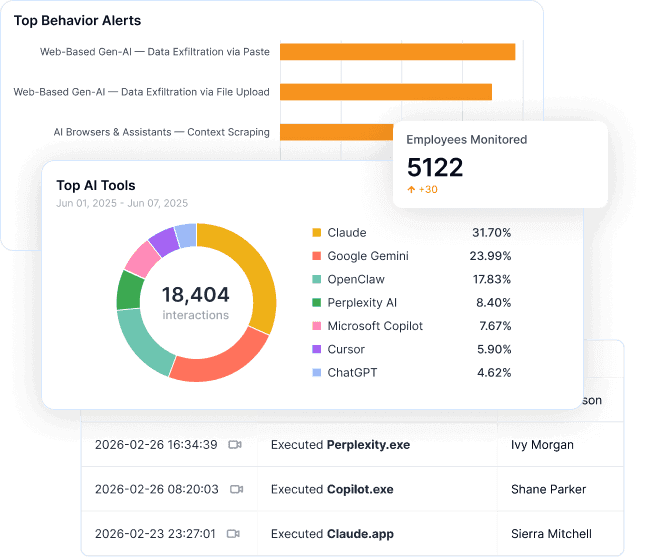

Three new AI Governance dashboards are available in your Teramind environment starting today.

AI Conversations and Application Usage: An evolution of the LLM Dashboard with refined visualizations. It gives you a complete, real-time picture of which AI tools are in use across your environment, by whom, how often, and in which teams. That includes installed apps, browser-based tools, and AI features embedded in SaaS products your security team may not be actively watching.

AI Data Exfiltration: The enforcement capability your DLP program doesn’t have yet. The exfiltration path doesn’t require a sophisticated attacker; it’s an employee pasting a contract into a prompt window. We detect sensitive data moving into AI tools before it’s out of your control.

Agentic AI: The fastest-moving part of the threat surface, and the one most governance conversations haven’t caught up with yet. Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. Agents don’t just respond to queries; they take actions, connect to systems, and operate on your infrastructure with varying degrees of human oversight. Teramind gives you visibility into what autonomous agents are actually doing inside your environment, a category that simply had no answer before now.

How we’re rolling it out

The behavioral rules powering AI Data Exfiltration and Agentic AI ship disabled by default. This was deliberate. Governance has to move at the pace your organization is ready for, not ours.

A licensing note: the behavioral rules behind AI Data Exfiltration and Agentic AI require a UAM-tier subscription or higher. If you’re on that tier, your customer success team can walk you through everything before you switch anything on.

Why this matters now

Shadow AI breaches took an average of 247 days to detect, according to IBM’s most recent findings. That’s not a technology failure. It’s a visibility failure. Organizations can’t investigate what they can’t see, and they can’t govern what they’ve never defined.

A policy without visibility is unenforceable. It’s just a document. The organizations that successfully govern AI usage aren’t the ones with the most comprehensive policy documents; they’re the ones with operational visibility into what’s actually happening and the ability to act on it.

The three dashboards we’re releasing today won’t complete your AI governance program. But they give you what every governance program eventually needs first: a clear, auditable, real-time picture of what AI is doing inside your environment, at the layer where it actually counts.

Want to see what these capabilities look like in your environment?